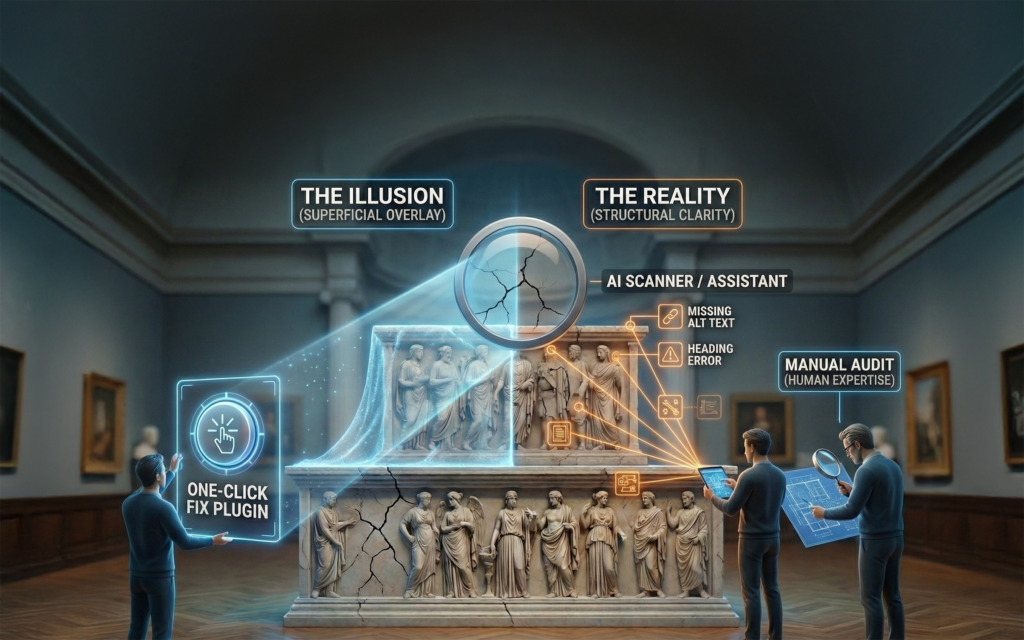

In part one of our series on UX testing in the museum environment, we showed why traditional A/B testing rarely works for museum websites. Part two showed how to wire a lean analytics stack that respects limited traffic yet records every important interaction.

Now it’s time to translate those numbers into human insight. This instalment dives into the qualitative side of evidence-based UX: structured heuristic evaluations that surface usability and accessibility flaws before launch, rotating heatmaps and session recordings that reveal real-world behavior, and small-sample usability tests that expose stumbling blocks long before you reach statistical significance. By blending these methods with the hard data you’re already collecting, your team will see not just what visitors do but why they do it, which is the missing link between measurement and meaningful improvement.

When quantitative data is sparse, qualitative UX tools like heatmaps and session recordings become especially valuable. They let you see how people actually interact with your site, even when your traffic numbers are small. One popular tool in this space is Hotjar (along with similar services like Crazy Egg or Microsoft Clarity). Here’s how such tools can help a museum website and why they suit low-traffic environments:

- Heatmaps – Visualizing User Attention: Heatmaps aggregate user interactions visually. A click heatmap shows where users tend to click on a page (with “hot” colors indicating frequent clicks), and a scroll heatmap shows how far down the page most users scroll. Even with a small number of users, a heatmap can quickly reveal obvious UX issues. Imagine your museum’s homepage heatmap shows many clicks on an image that isn’t actually clickable, because users assume it’s a button or link. That’s a strong signal to make the image clickable or change its appearance. Or a scroll map might show that only 30% of visitors scrolled to the bottom of your “Visit Us” page, which is a problem if your key admission information sits at the bottom. These insights don’t require statistical significance; they are direct observations of behavior. If 10 out of 10 people clicked an unrelated element thinking it was a link, you’ve found a point of confusion to fix. Heatmaps give you the “big picture” of how users engage with a page at a glance, often surfacing issues that analytics numbers alone can’t (like an FAQ accordion that nobody notices because it’s too far down).

- Session Recordings – Watching Real User Journeys: Session recording tools (like Hotjar’s Recordings feature) let you watch anonymized replays of actual visitors using your site. You see their mouse movements, clicks, taps, and scrolling, almost like looking over their shoulder. This can be incredibly insightful, especially if you only get a few dozen visitors a day: by watching even a handful of sessions, you may spot where people get frustrated or confused. For instance, you might observe a user repeatedly clicking a “Donate” button that doesn’t appear to respond (perhaps because a required form field was left empty and the page didn’t show an obvious error). Or you might see a user struggling to navigate the calendar of events and giving up after a few attempts. These real-world interactions can highlight pain points you would never detect from aggregate data. The key is that recordings show what users actually do rather than what they report, so they often catch issues that users themselves would never mention.

- User Feedback Polls: Tools like Hotjar also let you deploy on-site surveys or feedback widgets (e.g., a little pop-up asking “Was this page helpful? Yes/No” or a poll asking “What information were you looking for today?”). In a low-traffic setting, you won’t get tons of responses, but the ones you do get can be enlightening. For example, if even 5 people respond to a poll on your exhibitions page and 3 of them mention they wanted to find upcoming events, it suggests your navigation or content might not be aligning with visitor expectations (perhaps an “Events” section needs to be more prominent). Qualitative comments like “I can’t find parking information” or “the registration form is too long” directly point to areas for improvement. Use these feedback tools sparingly on key pages so as not to annoy visitors, but do take advantage of them – each response from a real visitor is valuable insight when you don’t have large numbers.

- Implement on a Rotating Basis: One practical tip for low-traffic sites is to rotate what you measure with heatmaps/recordings. Instead of trying to record every page (and getting a trickle of data on each), focus on one section at a time. For instance, run a heatmap on your homepage for a month to gather enough interactions to spot trends. Next month, switch the heatmap to your “Plan Your Visit” page, and so on. Over a few months, you’ll have collected meaningful interaction data on all your important pages. The same goes for session recordings: you might configure Hotjar to record sessions on specific pages or flows (like the membership sign-up process) for a period. By concentrating the tool’s attention, you ensure you get a critical mass of observations on each target area. This rotating approach is like doing a series of mini usability studies page-by-page. It’s cost-effective and works around the low traffic by being patient and systematic.
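A scroll heatmap is, in effect, a reach curve: for each depth on the page, the share of visits that scrolled at least that far. If you can export each visit’s maximum scroll depth (as a percentage of page height) from your heatmap tool or a custom analytics event, the curve is easy to compute yourself. The sketch below is a minimal illustration under that assumption; the function name, the thresholds, and the sample data are hypothetical.

```python
from typing import Dict, Sequence


def scroll_reach(
    max_depths: Sequence[float], thresholds: Sequence[int] = (25, 50, 75, 100)
) -> Dict[int, float]:
    """Return the percentage of visits that reached each depth threshold.

    max_depths: deepest scroll position per visit, as a % of page height
    (assumed to come from an export; the format is hypothetical).
    """
    if not max_depths:
        return {t: 0.0 for t in thresholds}
    total = len(max_depths)
    # A visit "reaches" a threshold if its deepest scroll is at or beyond it.
    return {
        t: round(100 * sum(1 for d in max_depths if d >= t) / total, 1)
        for t in thresholds
    }


# Hypothetical sample: ten visits to a "Visit Us" page.
visits = [100, 40, 55, 30, 100, 80, 20, 65, 100, 45]
for depth, share in scroll_reach(visits).items():
    print(f"{depth:>3}% of page: reached by {share}% of visits")
```

With only ten visits, “30% reached the bottom” means three people, so treat the curve as a prompt to look closer (for example, moving admission details higher up the page), not as a precise rate.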
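Tools such as Hotjar and Microsoft Clarity can flag “rage clicks” (rapid, repeated clicks on the same spot), which is a quick way to find sessions like the unresponsive “Donate” button example without watching every recording. If you have raw click events, the idea is simple enough to sketch. The event fields, the three-click threshold, and the one-second window below are illustrative assumptions, not how either tool actually defines the signal.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Click:
    session_id: str
    element: str      # identifier of the clicked element, e.g. a CSS selector (assumed field)
    timestamp: float  # seconds since the session started


def find_rage_clicks(
    clicks: List[Click], min_clicks: int = 3, window_s: float = 1.0
) -> List[Tuple[str, str]]:
    """Return (session_id, element) pairs with at least min_clicks clicks within window_s seconds."""
    # Group timestamps by session and element, so only repeats on the same target count.
    groups: Dict[Tuple[str, str], List[float]] = {}
    for c in clicks:
        groups.setdefault((c.session_id, c.element), []).append(c.timestamp)

    flagged = []
    for key, times in groups.items():
        times.sort()
        # Check every run of min_clicks consecutive clicks for one that fits inside the window.
        for i in range(len(times) - min_clicks + 1):
            if times[i + min_clicks - 1] - times[i] <= window_s:
                flagged.append(key)
                break
    return flagged


# Hypothetical click export: s1 hammers the Donate button; s2 clicks normally.
events = [
    Click("s1", "#donate-submit", 12.0),
    Click("s1", "#donate-submit", 12.4),
    Click("s1", "#donate-submit", 12.7),
    Click("s2", "#donate-submit", 5.0),
    Click("s2", "#donate-submit", 9.0),
    Click("s2", ".events-calendar-next", 3.0),
]
print(find_rage_clicks(events))
```

The output is a shortlist of sessions worth watching, not a diagnosis: someone still needs to view the recording to see why the button didn’t respond.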
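Writing the rotation down as a schedule helps a small team keep track of which page is being studied when. Here is a minimal sketch, assuming one target page per calendar month and a hypothetical list of priority pages; the heatmap or recording targeting itself would still be configured in your tool.

```python
from datetime import date
from typing import List, Tuple

# Hypothetical priority pages, in the order you want to study them.
PAGES = ["Homepage", "Plan Your Visit", "Exhibitions", "Membership sign-up", "Events calendar"]


def rotation_schedule(start: date, months: int, pages: List[str]) -> List[Tuple[str, str]]:
    """Assign one page per calendar month starting at `start`, cycling through `pages`."""
    schedule = []
    year, month = start.year, start.month
    for i in range(months):
        schedule.append((f"{year}-{month:02d}", pages[i % len(pages)]))
        # Advance to the next calendar month, rolling over the year after December.
        month += 1
        if month > 12:
            year, month = year + 1, 1
    return schedule


for period, page in rotation_schedule(date(2025, 11, 1), 6, PAGES):
    print(period, page)
```

Cycling back to the first page after a full round gives you a natural before-and-after comparison once fixes from the first round have shipped.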

Hotjar and similar tools often have free or affordable plans that cover a few thousand pageviews, which is enough for a small museum site. They are an “optional enhancement” to your analytics toolkit, not a replacement for it. The combination of GA4 and Hotjar is powerful: GA4 tells you what is happening in numbers, and Hotjar helps explain why by showing user behavior and capturing user voices. For a mixed-skills team, these visual and narrative insights can be more persuasive than statistical graphs. It’s one thing to tell colleagues “only 10% of users complete the tour booking form,” but quite another to show them a recording of a user struggling through five failed attempts at that form; the issue becomes clear and urgent. Use these tools to understand users and to validate changes (e.g., after a fix, watch new recordings to confirm that people get through the process without frustration). Just remember to respect privacy and ethical guidelines: never use recordings or heatmaps to collect personal data, and always anonymize feedback.

The Role and Limits of Focus Groups in UX Testing

Museums often use focus groups or committee discussions to gather input on exhibitions and programs, so it’s natural to think the same method could help with website UX: for example, getting a group of visitors in a room to talk about the website or preview a new design. Focus groups can yield some insights, but it’s important to recognize their limitations for UX testing and to frame any feedback you get accordingly:

- Not a Substitute for Usability Testing: Focus groups are qualitative, attitudinal research: they gather opinions, impressions, and recollections. As Therese Fessenden of the Nielsen Norman Group explains, what they don’t provide is direct observation of user behavior on your website. In a focus group, people might comment on a design shown to them or discuss what they think they would do, but this is very different from watching them actually try to accomplish tasks on the live site. As the Nielsen Norman Group article points out, focus groups “cannot provide any objective information on behavior when using a product or service” and they lack the detailed usability insights you’d get from one-on-one user testing. In practical terms, a focus group might tell you that “users think the homepage looks cluttered,” but it won’t tell you that “users could not find the mailing list sign-up on the homepage.” The latter comes only from observing actual use. So do not rely on focus groups alone to evaluate usability; where possible, complement them with direct usability tests (even informal ones).

- People Don’t Always Do What They Say: Another well-documented issue is that stated preferences often differ from actual behavior. In a focus group, participants might earnestly say “Yes, I would definitely use an interactive map feature” or “I never watch videos on museum websites.” But real usage data may not match these statements. Users aren’t trying to mislead; it’s simply hard for anyone to predict their own future behavior or recall their past behavior accurately. As researchers often put it, users do not always do what they say they will do. For example, a focus group could unanimously agree that a virtual tour feature would be fantastic, yet after you invest in it, nobody actually uses it once it’s live. This doesn’t mean you should ignore what people say; just treat it as subjective feedback, not gospel. When a focus group expresses a desire or dislike, treat that as a hypothesis and try to verify it with real-world data or testing where possible.

- Group Dynamics and Bias: Focus groups can amplify voices or opinions that aren’t truly representative. One or two outspoken participants can sway the discussion, leading to a kind of groupthink. There are also biases like negativity bias (participants might spend more time talking about what they didn’t like, overshadowing the things they found fine). In a museum context, imagine a focus group where a couple of tech-savvy members dominate, insisting the site needs more interactive bells and whistles, while quieter members (who may better represent the average visitor) actually find the current site fine. The risk is that the loudest opinion in the room gets mistaken for the majority opinion. To mitigate this, have a skilled facilitator manage the session so that everyone contributes, and, if you have the resources, run several smaller groups to capture a wider range of views.

- How to Frame Qualitative Insights: When you do gather qualitative feedback, whether via focus groups, interviews, or open-ended survey responses, frame it as exploratory insight, not final validation. Look for common themes or pain points mentioned across participants. For example, if several people in a focus group mention that the online collections search is confusing, that’s a strong signal to investigate that feature. You might then do a targeted usability test or check analytics for that section to see if users indeed struggle (perhaps high search exits or zero-result searches). Use focus group findings to generate hypotheses (“Maybe our navigation labels are not clear to users”), which you can then test or examine further. Also, focus on the why behind comments. If someone says “I don’t like the website’s layout,” probe deeper. Is it because they couldn’t find what they needed? Because the design felt outdated? Their reasoning will guide you to specific issues (navigation, visual design, content clarity, etc.).

- Educate Stakeholders about Focus Group Limits: Since museum teams are cross-functional, not everyone may be versed in UX research nuances. It’s worth gently explaining to colleagues and leadership that while focus groups can provide valuable feedback and ideas, they are not a reliable way to measure usability or success. The risk is that an enthusiastic focus group response (positive or negative) leads to overconfidence in a decision. For instance, concluding “Our focus group loved the new homepage design, so let’s launch it as-is” could be dangerous if the design hasn’t been tested for actual usability. Balance focus group input with other research. You might say, “The focus group gave us good suggestions for content emphasis, and now we’ll run a quick usability test to see if people can navigate the new design effectively.” By combining methods, you get the benefits of both. Remember that focus groups are best for broad direction and ideas (e.g., what kind of content people value, how they feel about the museum’s messaging), not for evaluating interaction details.
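Looking for themes across participants usually means tagging each comment with a theme and then counting how many distinct participants raised it, rather than how many times it came up. Counting people instead of mentions keeps one outspoken participant from inflating a theme, which also blunts the group-dynamics problem described earlier. A minimal sketch, assuming the notes have already been tagged by hand; the participant IDs and theme labels are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple

# Hypothetical hand-coded notes: (participant_id, theme) pairs from one session.
notes: List[Tuple[str, str]] = [
    ("P1", "collections search confusing"),
    ("P1", "collections search confusing"),  # same person repeating the point
    ("P1", "wants more interactive features"),
    ("P2", "collections search confusing"),
    ("P3", "collections search confusing"),
    ("P3", "parking info hard to find"),
    ("P4", "parking info hard to find"),
]


def participants_per_theme(pairs: List[Tuple[str, str]]) -> Dict[str, int]:
    """Count distinct participants per theme, most widely shared theme first."""
    who: Dict[str, Set[str]] = defaultdict(set)
    for participant, theme in pairs:
        who[theme].add(participant)
    ranked = sorted(((theme, len(people)) for theme, people in who.items()), key=lambda kv: -kv[1])
    return dict(ranked)


for theme, n in participants_per_theme(notes).items():
    print(f"{n} participant(s): {theme}")
```

With groups this small, the counts are a way to rank what to investigate next (say, a targeted usability test of the collections search), not evidence of how common a problem is among all visitors. The same tally works for open-ended poll responses.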

Focus groups can be one tool in your toolkit, but handle them with care. They shine at uncovering user attitudes, such as what local community members wish the museum site offered, which can inspire features or content that you then validate through other means. They falter when used to assess how easy something is to use or to predict exact behavior. So take qualitative feedback as inspiration and insight, then back it up with observational evidence whenever possible.

By layering heuristic evaluations, five-user tests, and a rotating roster of heatmaps and session recordings onto your clean GA4 dataset, you now have a 360-degree view of your visitors: the numbers show where friction lives, and the stories explain why it happens. Insight, however, is only valuable when it shapes what you launch next. In our final instalment we’ll take everything you’ve learned—focused test charters, lean instrumentation, and qualitative findings—and show how to apply it during a redesign, from zero-traffic prototypes to post-launch cohort tracking, all within a flexible site architecture that lets you iterate without waiting for the next rebuild.